Dave

asked people to share their CSS wish lists for 2023, and even though

it’s well into the second month of the year, I’m going to sprint

wild-eyed out of the brush along the road, grab the hitch on the back of

the departing bandwagon, and try to claw my way aboard.

At first I thought I had maybe four or five things to put on my list,

but as I worked on it, I kept thinking of one more thing and one more

thing until eventually I had a list of (checks notes) sixt — no, SEVENTEEN?!?!? What the hell.

There’s going to be some overlap with the things being worked on for

Interop 2023, and I’m sure

there will be overlap with other people’s lists. Regardless, here we

go.

Subgrid

Back in the day, I asserted Grid should wait for subgrid. I was

probably wrong about that, but I wasn’t wrong about the usefulness of

and need for subgrid. Nearly every time I implement a design, I trip

over the lack of widespread support.

I have a blog post in my head about how I hacked around this problem

for wpewebkit.org by applying the

same grid column template to nested containers, and how I could make it

marginally more efficient with variables. I keep not writing it, because

it would show the approach and improvement and then mostly be about the

limitations, flaws, and annoyances this approach embodies. The whole

idea just depresses me, and I would probably become impolitic.

So instead I’ll just say that I hope those browser engines that have

yet to catch up with subgrid support will do so in 2023.
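
For illustration only, here is a minimal sketch of the general workaround next to the subgrid version. This is not the wpewebkit.org code; the class names, the 12-column template, and the `--page-columns` variable are all assumptions made up for the example.

```css
/* Hypothetical class names; a 12-column page grid is assumed. */
:root {
  --page-columns: repeat(12, minmax(0, 1fr));
}

.page {
  display: grid;
  grid-template-columns: var(--page-columns);
  gap: 1em;
}

/* Workaround: the nested container spans the full width and repeats the
   parent's template, so its tracks only line up with the parent's because
   both use the same template, width, and gap. */
.page > .card {
  grid-column: 1 / -1;
  display: grid;
  grid-template-columns: var(--page-columns);
  gap: 1em;
}

/* Where subgrid is supported, the nested container borrows the parent's
   actual tracks for the columns it spans. */
@supports (grid-template-columns: subgrid) {
  .page > .card {
    grid-template-columns: subgrid;
  }
}
```

The variable saves retyping the template, but the workaround still falls apart as soon as the nested container spans only some of the parent's columns.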

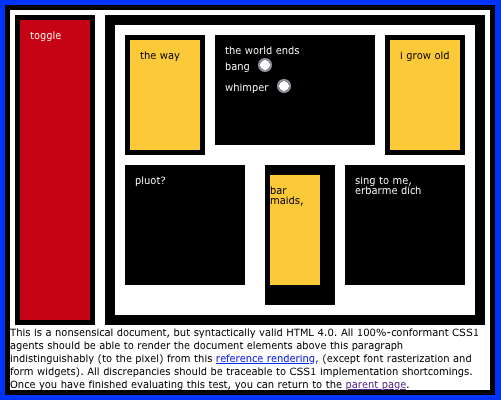

Masonry layout

Grid layout is great, and you can use it to create a masonry-style

layout, but having a real masonry layout mechanism would be better,

particularly if you can set it on a per-axis basis. What I mean is, you

could define a bunch of fixed (or flexible) columns and then say the

rows use masonry layout. It’s not something I’m likely to

use myself, but I always think the more layout possibilities there are,

the better.
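
A rough sketch of the per-axis idea, assuming the `masonry` keyword from the CSS Grid Level 3 draft (available experimentally in Firefox behind a flag at the time of writing, and subject to change). The `.gallery` class is hypothetical.

```css
.gallery {
  display: grid;
  /* Ordinary flexible columns on one axis... */
  grid-template-columns: repeat(auto-fill, minmax(12em, 1fr));
  /* ...and masonry packing on the other (draft syntax, may change). */
  grid-template-rows: masonry;
  gap: 1em;
}
```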

Grid track styles

For someone who doesn’t do a ton of layout, I find myself wanting to

style grid lines a surprising amount. I just want to do something

like:

display: grid;

gap: 1em;

grid-rules-columns: 1px dotted red;

…although it would be much better to be able to style separators for

a specific grid track, or even an individual grid cell, as well as be

able to apply it to an entire grid all at once.

No, I don’t know exactly how this should work. I’m an idea guy! But

that’s what I want. I want to be able to define what separator lines

look like between grid tracks, centered on the grid lines.

Anchored positioning

I’ve wanted this in one form or another almost since CSS2 was

published. The general idea is, you can position an element in relation

to the edges of another element that isn’t a containing block. I wrote

about this a bit in my post on connector lines for wpewebkit.org, but

another place it would have come in handy was with the

footnotes on The Effects of Nuclear Weapons.

See, I wanted those to actually be sidenotes, Tufte-style. Right now, in order to make sidenotes, you have to stick the footnote into the text, right where its footnote reference appears, or at a minimum, right after the element containing the footnote reference. Neither was acceptable to me, because it would dork up the source text.

What I wanted to be able to do was collect all the footnotes as

endnotes at the end of the markup (which we did) and then absolutely

position each to sit next to the element that referenced them, or

have it pop up there on click, tap, hover, whatever. Anchored positioning

would make that not just possible, but fairly easy to do.
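
As a hedged sketch of what that could look like, here is one footnote anchored to its reference, assuming the `anchor-name` property and `anchor()` function from the CSS Anchor Positioning draft (details may change). The IDs are hypothetical, and each reference/note pair would need its own anchor name.

```css
/* Hypothetical markup: <sup id="ref-1"> in the text,
   <li id="note-1"> among the endnotes at the end of the document. */
#ref-1 {
  anchor-name: --ref-1;
}

#note-1 {
  position: absolute;
  top: anchor(--ref-1 top); /* line up with the top of the reference */
  right: 1em;               /* ordinary offset: park it in the margin */
  width: 12em;
}
```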

Exclusions

Following on anchored positioning, I’d love to have

CSS Exclusions

finally come to browsers. Exclusions are a way to mark an element to

have other content avoid it. You know how floats move out of the normal

flow, but normal-flow text avoids overlapping them? That’s an exclusion.

Now imagine being able to position an element by other means, whether

grid layout or absolute positioning or whatever, and then say “have the

content of other elements flow around it”. Exclusions! See this

article by Rob Weychert for a more in-depth explanation of a common

use case.
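
A minimal sketch, assuming the `wrap-flow` property from the CSS Exclusions draft. No current browser supports it unprefixed as far as I know, so treat this as illustration rather than something to ship. The `.pullquote` class is hypothetical.

```css
.pullquote {
  /* Positioned by other means than float... */
  position: absolute;
  top: 10em;
  left: 30%;
  width: 40%;
  /* ...and then marked as an exclusion: inline content in the same
     containing block flows around both sides of the box. */
  wrap-flow: both;
}
```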

Element transitions

The web is cool and all, but you know how futuristic interfaces in

movies have pieces of the interface sliding and zooming and popping out and all that

stuff? Element transitions. You can already try them

out in Chrome Canary, Batman, and I’d love to see them across the

board. Even more, I’d love some gentle, easy-to-follow tutorials on how

to make them work, because even in their single-page form, I found the

coding requirements basically impossible to work out. Make them all-CSS,

and explain them like I’m a newb, and I’m in.
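
For what it's worth, here is a sketch of just the CSS side, assuming the feature is the one specified as View Transitions and using the names from Chrome's implementation (`view-transition-name` and the `::view-transition-*` pseudo-elements). It is not all-CSS: the transition still has to be started from script with `document.startViewTransition()`. The class name and the `hero` identifier are hypothetical.

```css
/* Give the element a name so its old and new states are paired up. */
.thumbnail.is-active {
  view-transition-name: hero;
}

/* Tune the default cross-fade for that named element. */
::view-transition-old(hero),
::view-transition-new(hero) {
  animation-duration: 0.4s;
  animation-timing-function: ease-in-out;
}
```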

Nested Selectors

A lot of people I know are still hanging on to preprocessors solely

because they permit nested selectors, like:

main {
  padding: 1em;
  background: #F1F1F0;
  h2 {
    border-block-end: 1px solid gray;
  }
  p {
    text-indent: 2em;
  }
}

The CSS Working Group has been wrestling with this for quite some

time now, because it turns out the CSS parsing rules make it hard to

just add this, there are a lot of questions about how this should

interact with pseudo-classes like :is(), there are serious

concerns about doing this in a way that will be maximally

future-compatible, and also there has been a whole lot of argument over

whether it’s okay to clash with Sass syntax or not.

So it’s a difficult thing to make happen in native CSS, and the

debates are both wide-ranging and slow, but it’s on my (and probably

nearly everyone else’s) wish list. You can try it out in Safari Technology Preview

as I write this, so here’s hoping for accelerating adoption!
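
For comparison, this is the flat CSS that the nested example above stands for:

```css
/* Each nested rule becomes a descendant selector under main. */
main {
  padding: 1em;
  background: #F1F1F0;
}

main h2 {
  border-block-end: 1px solid gray;
}

main p {
  text-indent: 2em;
}
```

As far as I know, some early implementations required a nested selector to start with a symbol rather than a type selector, so `& h2 { … }` is the more conservative spelling while support settles.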

More and better :has()

Okay, since I’m talking about selectors already, I’ll throw in

universal, full-featured, more optimized support for

:has(). One browser doesn’t support compound selectors, for

example. I’ve also thought that maybe some combinators would be nice,

so that a:has(> b) could also be written as

a < b.

But I also wish for people to basically go crazy with

:has(). There’s SO MUCH THERE. There are so many

things we can do with it, and I don’t think we’ve touched even a tiny

fraction of the possibility space.
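
A few small examples of the kind of thing `:has()` allows, with hypothetical styling choices:

```css
/* A figure that directly contains a caption gets a border. */
figure:has(> figcaption) {
  border: 1px solid gray;
}

/* A heading immediately followed by a paragraph loses its bottom margin. */
h2:has(+ p) {
  margin-block-end: 0;
}

/* A form containing any invalid field gets a warning outline. */
form:has(:invalid) {
  outline: 2px solid crimson;
}
```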

More attr()

I’ve wanted attr() to be more widely accepted in CSS

values since, well, I can’t remember. A long time. I want to be

able to do something like:

p[data-size] {width: attr(data-size, rem);}

<p data-size="27">…</p>

Okay, not a great example, but it conveys the idea. I also talked

about this in my

post about aligning table columns.
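
Until something like that exists, a common workaround is to pass the number through a custom property instead of a data attribute. This is a sketch under assumptions: the `sized` class and the `--size` property are made up for the example.

```css
/* Hypothetical markup: <p class="sized" style="--size: 27">…</p> */
.sized {
  /* Multiply the unitless number by 1rem to get a length;
     fall back to 20 if --size is not set. */
  width: calc(var(--size, 20) * 1rem);
}

/* What attr() can already do today: supply a string for content. */
p[data-size]::before {
  content: attr(data-size);
}
```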

I realize adding this would probably lead to

someone creating a framework called Headgust where all the styling is

jammed into a million data-* attributes and the whole of the

framework’s CSS is nothing but property: attr() declarations

for every single CSS property known to man, but we shouldn’t let that stop us.

Variables in media queries

Basically I want to be able to do this:

:root {--mobile: 35em;}

@media (min-width: var(--mobile)) {
  /* non-mobile styles go here */
}

That’s it. This was made possible in container queries, I believe, so

maybe it can spread to media (and feature?) queries. I sure hope so!
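
The closest existing proposal I'm aware of is `@custom-media` from the Media Queries Level 5 draft. As far as I know no browser supports it natively, so in practice it needs a build step. The `--not-mobile` name is hypothetical.

```css
/* Draft syntax: name a media query once... */
@custom-media --not-mobile (min-width: 35em);

/* ...and reuse it wherever the breakpoint is needed. */
@media (--not-mobile) {
  /* non-mobile styles go here */
}
```

It names a whole query rather than reading a custom property, so it is a neighbor of the wish above, not the wish itself.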

Logical modifiers

You can do this:

p {margin-block: 1em; margin-inline: 1rem;}

But you can’t do this:

p {margin: logical 1em 1rem;}

I want to be able to do that. We should all want to be able

to do that, however it’s made possible.

Additive values

You know how you can set a bunch of values with a comma-separated

list, but if you want to override just one of them, you have to do the

whole thing over? I want to be able to add another thing to the list

without having to do the whole thing over. So rather than adding a value

like this:

background-clip: content-box, content-box, border-box, padding-box; /* needed to add padding-box */

…I want to be able to do something like:

background-clip: add(padding-box);

No, I don’t know how to figure out where in the list it should be

added. I don’t know a lot of things. I just know I want to be able to do

this. And also to remove values from a list in a similar way, since I’m

pony-wishing.
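
A partial workaround that works today is to keep the list in a custom property and tack values on at the end. It is only a sketch: the class names and `--clips` are hypothetical, and it only helps when the original rule was written with the property in the first place.

```css
.box {
  --clips: content-box, content-box, border-box;
  background-clip: var(--clips);
}

/* Append one more value without retyping the whole list. */
.box.extra-layer {
  background-clip: var(--clips), padding-box;
}
```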

Color shading and blending

Preprocessors already allow you to say you want the color of an

element to be 30% lighter than it would otherwise be. Or darker. Or

blend two colors together. Native CSS should have the same power. It’s being

worked on. Let’s get it done, browsers.
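
One piece of that work is `color-mix()`, which was starting to ship in browsers around the time of writing. A minimal sketch, with a hypothetical `--brand` color and class names; mixing in 30% white is a rough stand-in for "30% lighter", not an exact match for what preprocessors compute.

```css
:root {
  --brand: #336699;
}

.button {
  background-color: var(--brand);
}

/* Lighter: 70% brand, 30% white. */
.button:hover {
  background-color: color-mix(in srgb, var(--brand) 70%, white);
}

/* Darker: 70% brand, 30% black. */
.button:active {
  background-color: color-mix(in srgb, var(--brand) 70%, black);
}

/* Blend two colors evenly (50/50 is the default). */
.badge {
  background-color: color-mix(in srgb, var(--brand), gold);
}
```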

Hanging punctuation

Safari has supported hanging-punctuation forever (where “forever”,

in this case, means since 2016) and it’s long past time for other browsers

to get with the program. This should be universally supported.
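
For reference, a typical use looks something like this (the selector is just an example):

```css
/* Let an opening quote mark hang outside the start of the first line,
   and a closing one hang outside the end of the last line. */
blockquote p {
  hanging-punctuation: first last;
}
```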

Cross-boundary styles

I want to be able to apply styles from my external (or even embedded) CSS to a resource like an external SVG. I realize this sets up all kinds

of security and privacy concerns. I still want to be able to do it. Every time I have to embed an entire inline SVG into a template just so

I can change the fill color of a logo based on its page context, I grit

my teeth just that little bit harder. It tasks me.
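
For anyone who hasn't hit this, the inline workaround usually looks something like the sketch below. The class names are hypothetical, and it assumes the SVG's shapes don't set their own `fill`.

```css
/* Hypothetical markup: <a class="logo"><svg>…</svg></a>,
   with the SVG inlined into the template. */
.logo svg {
  fill: currentColor; /* shapes inherit the link's text color */
}

.site-header .logo {
  color: white;
}

.site-footer .logo {
  color: gray;
}
```

None of this reaches an SVG loaded through `<img>` or a background image, which is the whole complaint.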

Scoped styling (including imports)

The Mirror Universe version of the previous wish is that I want to be

able to say a bit of CSS, or an entire style sheet (embedded or

external), only applies to a certain DOM node and all its descendants.

“But you can do that with descendant selectors!” Not always. For that

matter, I’d love to be able to just say:

<div style="@import(styles.css);">

…and have that apply to that <div> and its

descendants, as if it were an <iframe>, while

not being an <iframe> so styles from the

document could also apply to it. Crazy? Don’t care. Still want it.
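
The nearest thing in the pipeline that I know of is `@scope` from the CSS Cascading and Inheritance Level 6 draft, which was still experimental at the time of writing. It covers the "this node and its descendants" part, not the inline import. The class names are hypothetical.

```css
/* These rules apply only inside elements matching .card. */
@scope (.card) {
  img {
    border: 1px solid gray;
  }

  p {
    margin-block: 0.5em;
  }
}

/* A lower boundary: style links in .card, but stop at .card-content. */
@scope (.card) to (.card-content) {
  a {
    color: inherit;
  }
}
```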

Linked flow regions(?)

SPECIAL BONUS TENTATIVE WISH: I didn’t particularly like how CSS Regions were

structured, but I really liked the general idea. It would be really

great to be able to link elements together, and allow the content to

flow between them in a “smooth” manner. Even to allow the content from

the second region to flow back into the first, if there’s room for it

and nothing prevents it. I admit, this is really a “try to recreate

Aldus PageMaker

in CSS” thing for me, but the idea still appeals to me,

and I’d love to see it come to CSS some day.
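
For context, the core of the CSS Regions draft was a pair of properties, sketched here with hypothetical class names and flow name. As far as I know, no current browser supports them unprefixed.

```css
/* Pull this element's content out of normal flow into a named flow. */
.article-source {
  flow-into: article;
}

/* Pour that named flow through these boxes, in document order. */
.column-a,
.column-b {
  flow-from: article;
}
```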

So there you go. I’d love to hear what you’d like to see added to CSS, either in the comments below or in posts of your own.