I’ve heard it said there are two kinds of tech power users: the ones who constantly update to stay on the bleeding edge, and the ones who update only when absolutely forced to do so. I’m in the latter camp. If a program, setup, or piece of hardware works for me, I stick by it like it’s the last raft off a sinking island.

And so it has been for my early 2013 MacBook Pro, which has served me incredibly well across all those years and many continents, but was sliding into the software update chasm: some applications, and for that matter its operating system, could no longer be run on its hardware. Oh and also, the top row of letter keys was becoming unresponsive, in particular the E-R-T sequence. Which I kind of need if I’m going to be writing English text, never mind reloading pages and opening new browser tabs.

Stepping Up

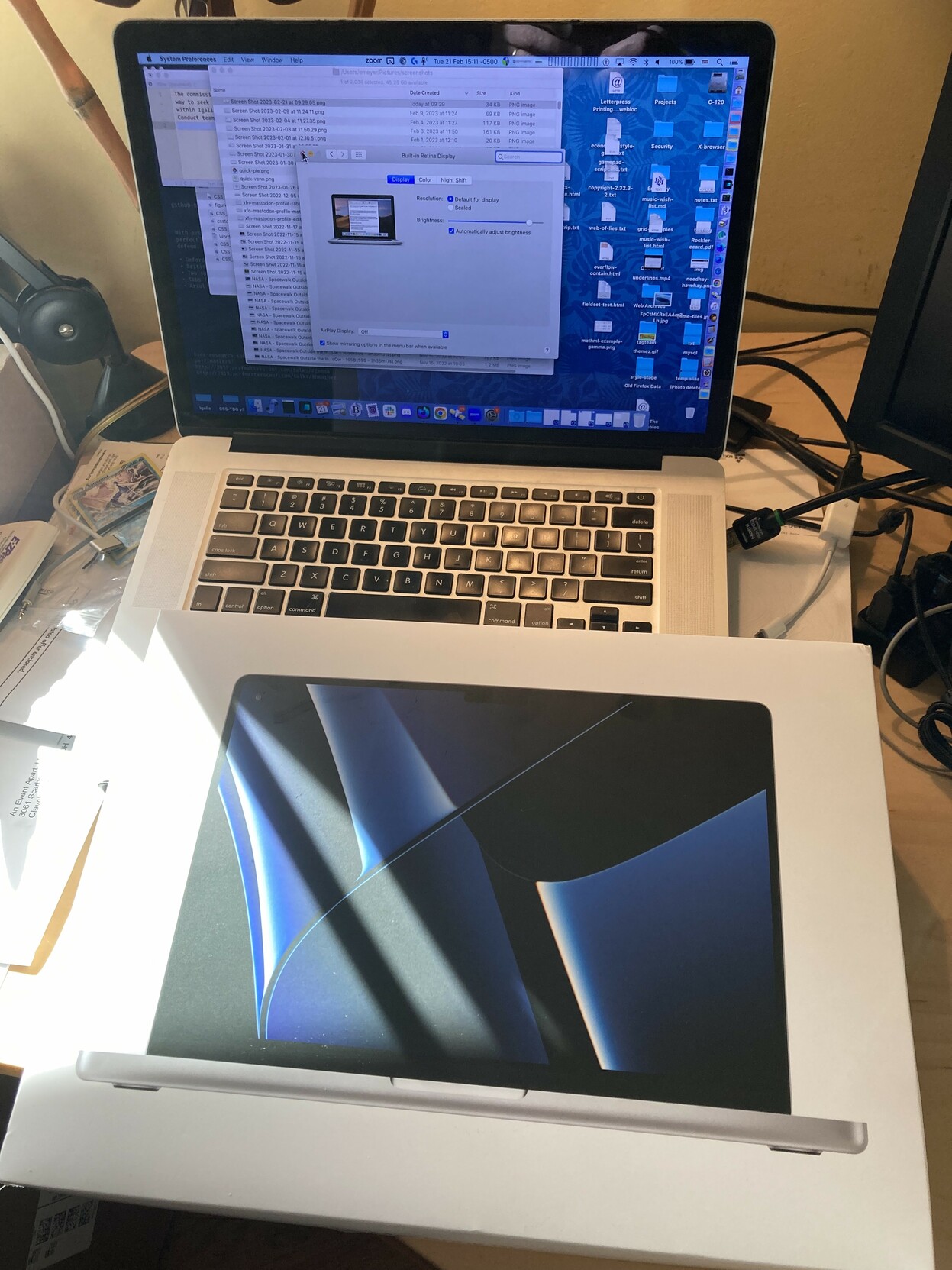

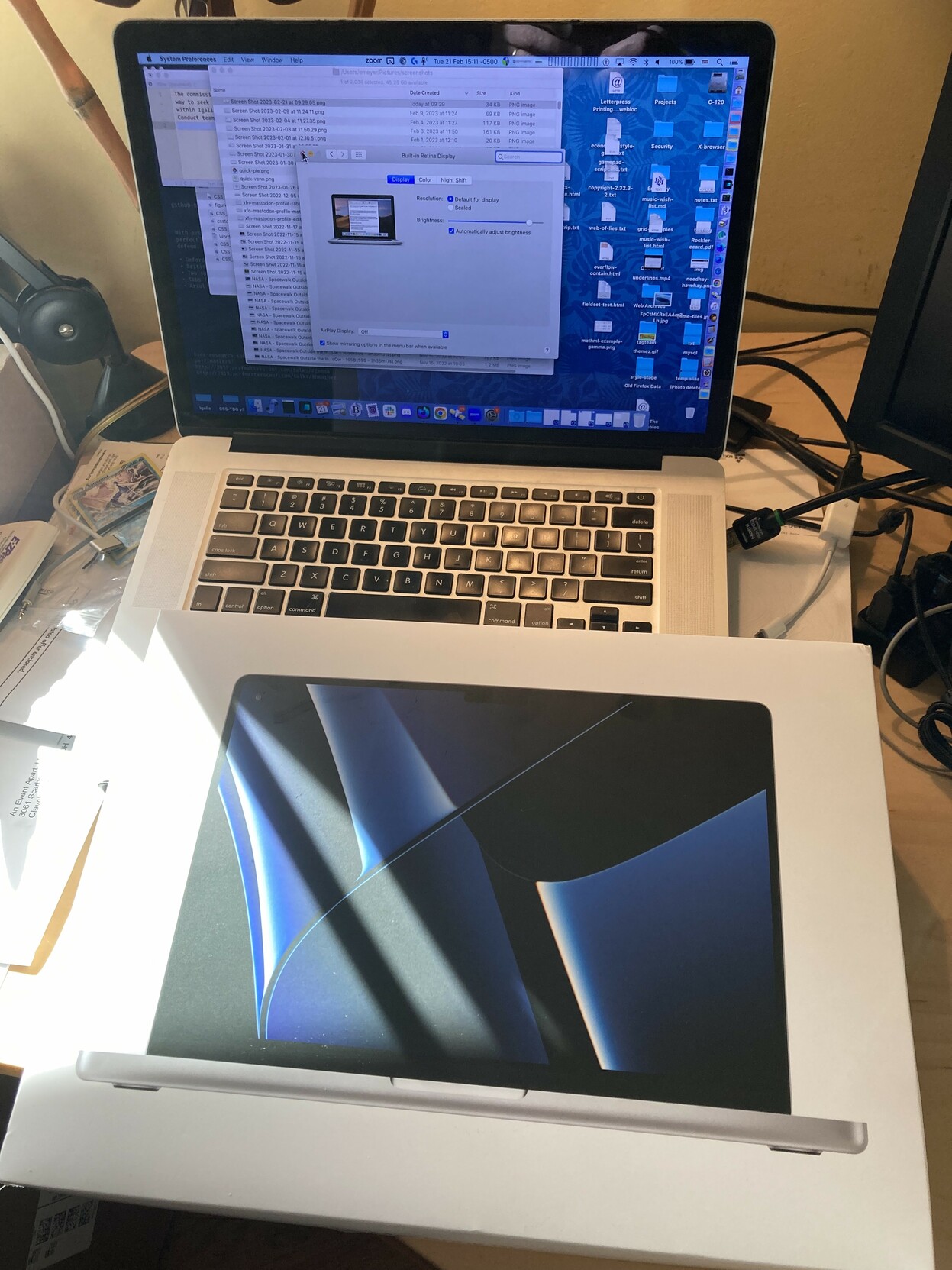

The grizzled old veteran on the verge of retirement and the fresh new recruit that just transferred in to replace them.


So on Monday, I dropped by the Apple Store and picked up a custom-built early 2023 MacBook Pro: M2 Max with 38 GPU cores, 64GB RAM, and 2TB SSD. (Thus quadrupling the active memory and nearly trebling the storage capacity of its predecessor.) I went with that balance, or perhaps imbalance, because I intend to have this machine last me another ten years, and in that time, RAM is more likely to be in demand than SSD. If I’m wrong about that, I can always plug in an external SSD. Many thanks to the many people in my Mastodon herd who nudged me in that direction.

I chose the 14” model over the 16”, so it is a wee bit smaller than my old 15” workhorse. The thing that surprises me is the new machine looks boxier, somehow. Probably it’s that the corners of the case are not nearly as rounded as the 2013 model, and I think the thickness ratio of display to body is closer to 1:1 than before. It isn’t a problem or anything, it’s just a thing that I notice. I’ll probably forget about it soon enough.

Some things I find mildly-to-moderately annoying:

- DragThing doesn’t work any more. It had stopped being updated before the 64-bit revolution, never mind the shift to Apple silicon, so this was expected, but wow do I miss it. Like a stick-shift driver uselessly stomping the floorboards and blindly grasping air while driving an automatic car, I still flip the mouse pointer toward the right edge of the screen, where I kept my DragThing dock, before remembering it’s gone. I’ve looked at alternatives, but none of them seem like they’re meant as straight-up replacements, so I’ve yet to commit to one. Maybe some day I’ll ask Daniel to teach me Swift so I can build my own. (Because I definitely need more demands on my time.)

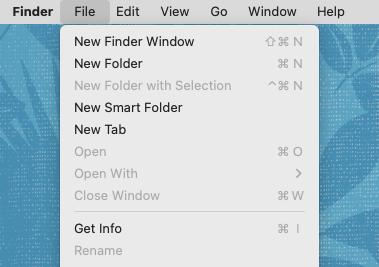

- The twisty arrows in the Finder to open and close folders don’t have enough visual weight. Really, the overall UI feels like a movie’s toy representation of an operating system, not an actual operating system. I mean, the visual presentation of the OS looks like something I would create, and brother, that is not a compliment.

- The Finder’s menu bar has no visually distinct background. What the hell. No, seriously, what the hell? The Notch I’m actually okay with, but removing the distinction between the active area of the menu bar and the inert rest of the desktop seems… ill-advised. Do not like. HARK, A FIX: Cory Birdsong pointed me to “System Settings… > Accessibility > Display > Reduce Transparency”, which fixes this, over on Mastodon. Thanks, Cory!

- I’m not used to the system default font(s) yet. I imagine that will come with time, but they still catch me off guard here and there.

- The alert and other system sounds are different, and I don’t like them. Sosumi.

Oh, and it’s weird to me that the Apple logo on the back of the display doesn’t glow. Not annoying, just weird.

Otherwise, I’m happy with it so far. Great display, great battery life, and the keyboard works!

Getting Migratory

The 2013 MBP was backed up nightly to a 1TB Samsung SSD, so that was how I managed the migration: plugged the SSD into the new MBP and let Migration Assistant do its thing. This got me 90% of the way there, really. The remaining 10% is what I’ll talk about in a bit, in case anyone else finds themselves in a similar situation.

The only major hardware hurdle I hit was that my Dell U2713HM monitor, also of mid-2010s vintage, seems to limit HDMI signals to 1920×1080 despite supposedly supporting HDMI 1.4, which caught me by surprise. When connected to a machine via DisplayPort, even my 2013 MBP, the Dell will go up to 2560×1440. The new MBP only has one HDMI port and three USB-C ports. Fortunately, the USB-C ports can be used as DisplayPorts, so I acquired a DisplayPort–to–USB-C cable and that fixed the situation right up.

Yes, I could upgrade to a monitor that supports USB-C directly, but the Dell is a good size for my work environment, it still looks pretty good, and did I mention I’m the cling-tightly-to-what-works kind of user?

Otherwise, in the hardware space, I’ll have to figure out how I want to manage connecting all the USB-A devices I have (podcasting microphone, wireless headset, desktop speaker, secondary HD camera, etc., etc.) to the USB-C ports. I expected that to be the case, just as I expected some applications would no longer work. I expect an adapter cable or two will be necessary, at least for a while.

Trouble Brewing

I said earlier that Migration Assistant got me 90% of the way to being switched over. Were I someone who doesn’t install stuff via the Terminal, I suspect it would have been 100% successful, but I’m not, so it wasn’t. As with the cables, I anticipated this would happen. What I didn’t expect was that covering that last 10% would take me only an hour or so of actual work, most of it spent waiting on downloads and installs.

First, the serious and quite unexpected problem: my version of Homebrew used an old installation prefix, one that could break newer packages. So, I needed to migrate Homebrew itself from /usr/local to /opt/homebrew. Some searching around indicated that the best way to do this was uninstall Homebrew entirely, then install it fresh.
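For anyone wanting to check whether they are in the same boat, here is a minimal sketch. It assumes macOS, where Homebrew's default prefix is /opt/homebrew on Apple silicon and /usr/local on Intel. The function name default_brew_prefix is made up for illustration, and a terminal running under Rosetta may report x86_64 even on an Apple silicon machine.

```shell
#!/bin/sh
# Default Homebrew prefix for a given CPU architecture (macOS only).
# default_brew_prefix is a hypothetical helper, not part of Homebrew.
default_brew_prefix() {
  case "$1" in
    arm64)  echo "/opt/homebrew" ;;   # Apple silicon
    x86_64) echo "/usr/local" ;;      # Intel
    *)      echo "unknown" ;;
  esac
}

expected="$(default_brew_prefix "$(uname -m)")"

# Compare against what the installed brew reports, if there is one.
if command -v brew >/dev/null 2>&1; then
  actual="$(brew --prefix)"
  if [ "$actual" = "$expected" ]; then
    echo "Homebrew prefix $actual matches this machine's architecture."
  else
    echo "Homebrew lives in $actual, but $expected is the default for $(uname -m)."
  fi
else
  echo "brew not found; a fresh install would default to $expected."
fi
```

A mismatch on a migrated machine is the situation described above: an Intel-era install carried over to Apple silicon hardware.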

Okay, except that would also remove everything I’d installed with Homebrew. Which was maybe not as much as some of y’all, but it was still a fair number of fairly essential packages. When I ran brew list, I got over a hundred packages, of which most were dependencies. What I found through further searching was that brew leaves returns a list of the packages I’d installed, without their dependencies. Here’s what I got:

automake

bash

bison

chruby

ckan

cmake

composer

ffmpeg

gh

git

git-lfs

httpd

imagemagick

libksba

lynx

minetest

minimal-racket

pandoc

php

php@7.2

python@3.10

ruby

ruby-install

wget

yarn

That felt a lot more manageable. After a bit more research, boiled down to its essentials, the New Brew Shuffle I came up with was:

$ brew leaves > brewlist.txt

$ /bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/uninstall.sh)"

$ xcode-select --install

$ /bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

$ xargs brew install < brewlist.txt

The above does elide a few things. In step two, the Homebrew uninstall script identified a bunch of directories that it couldn’t remove and that would have to be deleted manually. I saved all that to a text file (thanks to Warp’s “Copy output” feature) for later study, and pressed onward. I probably also had to sudo some of those steps; I no longer remember.

In addition to all the above, I elected to delete a few of the packages in brewlist.txt before I fed it back to brew install in the last step (things like ckan, left over from my Kerbal Space Program days) and to remove the version dependencies for PHP and Python. Overall, the process was pretty smooth. I just had to sit there and watch Homebrew chew through all the installs, including all the dependencies.
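The list trimming described here was done by hand, but it could also be scripted. The following is only a sketch: trim_brew_list is a hypothetical helper name, and it assumes every "@version" suffix should be stripped, which may not suit packages that really do need a pinned version.

```shell
#!/bin/sh
# trim_brew_list (hypothetical helper): reads package names on stdin, then
#   - strips "@version" suffixes (php@7.2 -> php)
#   - drops any package named as an argument
#   - removes blank lines and duplicates, keeping the original order
trim_brew_list() {
  awk -v drops="$*" '
    BEGIN { n = split(drops, d, " "); for (i = 1; i <= n; i++) skip[d[i]] = 1 }
    { sub(/@.*/, "") }
    $0 != "" && !($0 in skip) && !seen[$0]++
  '
}

# Example usage (not run here; file names are placeholders):
#   trim_brew_list ckan < brewlist.txt > brewlist-trimmed.txt
#   xargs brew install < brewlist-trimmed.txt
```

Stripping the suffix before de-duplicating matters: php and php@7.2 both collapse to php, so it is only installed once.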

Cleanup

Once all the reinstalls from the last step had finished, I was left with a few things to clean up. For example, Python didn’t seem to have installed. Eventually I realized it had actually installed as python3 instead of just plain python, so that was mostly fine and I’m sure there’s a way to alias one to the other that I might get around to looking up one day.
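One common way to do that aliasing, sketched here on the assumption that the shell is zsh (the default on recent macOS) and that python3 is on the PATH, is a single alias line in ~/.zshrc:

```shell
# Add this line to ~/.zshrc, then open a new terminal window.
# Assumes python3 is already on the PATH.
alias python=python3
```

An alias only affects interactive shells; scripts that call python directly will not see it.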

Ruby also didn’t seem to reinstall cleanly: there was a library it was looking for that complained about the chip architecture, and attempts to overcome that spawned even more errors, and none of them were decipherable to me or my searches. Multiple attempts at uninstalling and then reinstalling Ruby through a variety of means, some with Homebrew, some other ways, either got me the same indecipherable errors or a whole new set of indecipherable errors. In the end, I just uninstalled Ruby, as I don’t actually use it for anything I’m aware of, and the default Ruby that comes with macOS is still there. If I run into some script I need for work that requires something more, I’ll revisit this, probably with many muttered imprecations.

Finally, httpd wasn’t working as intended. I could launch it with brew services start httpd, but the resulting server was pointing to a page that just said “It works!”, and not bringing up any of my local hosts. Eventually, I found where Homebrew had stuffed httpd and its various files, and then replaced its configuration files with my old configuration files. Then I went through the cycle of typing sudo apachectl start, addressing the errors it threw over directories or PHP installs or whatever by editing httpd.conf, and then trying again.

After only three or four rounds of that, everything was up and running as intended — and as a bonus, I was able to mark httpd as a Login item in the Finder’s System Settings, so it will automatically come back up whenever I reboot! Which my old machine wouldn’t do, for some reason I never got around to figuring out.
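The config swap and retry cycle for httpd could be sketched roughly as follows. This is not a record of the exact commands used: restore_config is a hypothetical helper, the old config path is a placeholder, and the Homebrew config location shown in the usage comments is an assumption that should be checked on the machine in question.

```shell
#!/bin/sh
# restore_config (hypothetical helper): replace a freshly installed config
# file with an older one, keeping the installed version as "<file>.orig".
#   $1 = old config to restore
#   $2 = config file that the new install created
restore_config() {
  old="$1"
  new="$2"
  if [ ! -f "$old" ]; then
    echo "missing: $old" >&2
    return 1
  fi
  if [ -f "$new" ]; then
    cp -p "$new" "$new.orig"   # keep the installed default for reference
  fi
  cp -p "$old" "$new"
}

# Example usage (not run here; both paths are assumptions):
#   restore_config ~/backup/httpd.conf "$(brew --prefix)/etc/httpd/httpd.conf"
#   apachectl configtest   # checks the config syntax without starting the server
```

Running apachectl configtest between edits reports syntax problems before a start is attempted, which can shorten each round of the cycle.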

Now I just need to decide what to call this thing. The old MBP was “CoCo”, as in the TRS-80 Color Computer, meant as a wry commentary on the feel of the keyboard and a callback to the first home computer I ever used. That joke still works, but I’m thinking the new machine will be “C64” in honor of the first actually powerful home computer I ever used and its 64 kilobytes of RAM. There’s a pleasing echo between that and the 64 gigabytes of RAM I now have at my literal fingertips, four decades later.

Now that I’m up to date on hardware and operating system, I’d be interested to hear what y’all recommend for good quality-of-life improvement applications or configuration changes. Link me up!