Back in 2023, I belatedly jumped on the bandwagon of people posting their CSS wish lists for the coming year. This year I’m doing all that again, less belatedly! (I didn’t do it last year because I couldn’t even. Get it?)

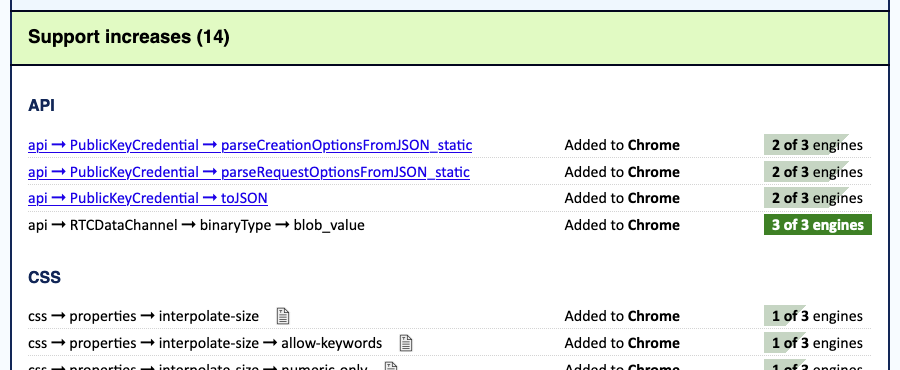

I started this post by looking at what I wished for a couple of years ago, and a small handful of my wishes came true:

Note that by “came true”, I mean “reached at least Baseline Newly Available”, not “reached Baseline Universal”; that latter status comes over time. And more :has() isn’t really a feature you can track, but I do see more people sharing cool :has() tricks and techniques these days, so I’ll take that as a positive signal.

A couple more of my 2023 wishes are on the cusp of coming true:

Those are both in the process of rolling out, and look set to reach Baseline Newly Available before the year is done. I hope.

That leaves the other half of the 2023 list, none of which has seen much movement. So those will be the basis of this year’s list, with some new additions.

Hanging punctuation

WebKit has been the sole implementor of this very nice typographic touch for almost a decade now. The lack of any support by Blink and Gecko is now starting to verge on feeling faintly ridiculous.
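For reference, a minimal sketch of what using it looks like, assuming the `hanging-punctuation` property as described in the CSS Text module. The `blockquote` selector is only an illustrative choice, and engines without support will simply ignore the declaration.

```css
/* Illustrative selector; any block of running text would do. */
blockquote {
  /* first: an opening quote or bracket at the start of the first line hangs outside the box.
     last: a closing quote or bracket at the end of the last line hangs outside the box. */
  hanging-punctuation: first last;
}
```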

Margin and line box trimming

Trim off the leading block margin on the first child in an element, or the trailing block margin of the last child, so they don’t stick out of the element and mess with margin collapsing. Same thing with block margins on the first and last line boxes in an element. And then, be able to do similar things with the inline margins of elements and line boxes! All these things could be ours.

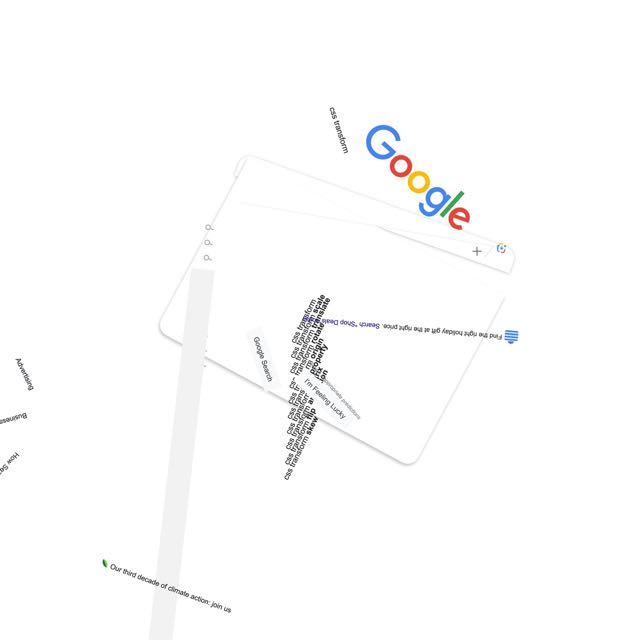
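The margin half of this can be sketched with the `margin-trim` property from the CSS Box Model Level 4 draft. This is a sketch under assumptions: the `.card` class is hypothetical, and support should be checked before relying on it.

```css
/* Hypothetical container whose children carry block margins. */
.card {
  padding: 1.5em;
  /* Trim the first child's block-start margin and the last child's
     block-end margin so neither pokes out past the padding. */
  margin-trim: block;
}

.card > * {
  margin-block: 1em;
}
```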

Stroked text

We can already fake text stroking with text-shadow and paint-order, at least in SVG. I’d love to have a text-stroke property that can be applied to HTML, SVG, and MathML text. And XML text and any text that CSS is able to style. It should be at least as powerful as SVG stroking, if not more so.
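To make the current workarounds concrete, here is a rough sketch of the two fakes mentioned above. The `.outlined` class name and the specific colors and widths are illustrative assumptions, not recommendations.

```css
/* HTML text: approximate a 1px outline with four offset shadows.
   Corners get visibly rough as the offset grows. */
.outlined {
  color: white;
  text-shadow:
    1px 0 0 black,
    -1px 0 0 black,
    0 1px 0 black,
    0 -1px 0 black;
}

/* SVG text: a real stroke, with paint-order drawing the stroke
   first so the fill sits on top of it. */
svg text {
  fill: white;
  stroke: black;
  stroke-width: 2px;
  stroke-linejoin: round;
  paint-order: stroke fill;
}
```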

Expanded attr() support

This has seen some movement specification-wise, but last I checked, no implementation promises or immediate plans. Here’s what I want to be able to do:

td {width: attr(data-size em, 1px);}

<td data-size="5">…</td>

The latest Values and Units module describes this, so fingers crossed it starts to gain some momentum.

Exclusions

Yes, I still want CSS Exclusions, a lot. They would make some layout hacks a lot less hacky, and open the door for really cool new hacks, by letting you just mark an element as creating a flow exclusion for the content of other elements. Position an image across two columns of text and set it to exclude, and the text of those columns will flow around or past it like it was a float. This remains one of the big missing pieces of CSS layout, in my view. Linked flow regions is another.
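The two-column image scenario might look something like the following. This is only a sketch: it assumes the `wrap-flow` property as written in the CSS Exclusions draft, which current browser engines do not appear to ship, and the `.article` and `.pullout` class names are made up for illustration.

```css
/* Hypothetical two-column article that acts as the positioning context. */
.article {
  position: relative;
  columns: 2;
}

/* Hypothetical image positioned across both columns. */
.pullout {
  position: absolute;
  top: 10em;
  left: 25%;
  width: 50%;
  /* Draft syntax: make this box an exclusion so inline content
     in both columns flows around it on either side. */
  wrap-flow: both;
}
```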

Masonry layout

This one is a bit stalled because the basic approach still hasn’t been decided. Is it part of CSS Grid or its own display type? It’s a tough call. There are persuasive arguments for both. I myself keep flip-flopping on which one I prefer.

Designers want this. Implementors want this. In some ways, that’s what makes it so difficult to pick the final syntax and approach: because everyone wants this, everyone wants to make the exactly perfect right choices for now, for the future, and for ease of teaching new developers. That’s very, very hard.
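To make the fork concrete, here is a rough sketch of the two directions. Both are proposal syntax rather than anything settled, and the `.gallery-a` and `.gallery-b` class names are hypothetical.

```css
/* Direction 1: masonry as a mode of CSS Grid (proposal syntax). */
.gallery-a {
  display: grid;
  grid-template-columns: repeat(auto-fill, minmax(12rem, 1fr));
  grid-template-rows: masonry;
  gap: 1em;
}

/* Direction 2: masonry as its own display type (proposal syntax).
   Track-sizing property names for this variant are not settled,
   so none are shown here. */
.gallery-b {
  display: masonry;
  gap: 1em;
}
```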

Grid track and gap styles

Yeah, I still want a Grid equivalent of column-rule, except more full-featured and powerful. Ideally this would be combined with a way to select individual grid tracks, something like:

.gallery {display: grid;}

.gallery:col-track(4) {gap-rule: 2px solid red;}

…in order to just put a gap rule on that particular column. I say that would be ideal because then I could push for a way to set the gap value for individual tracks, something like:

.gallery {gap: 1em 2em;}

.gallery:row-track(2) {gap: 2em 0.5em;}

…to change the leading and trailing gaps on just that row.

Custom media queries

This was listed as “Media query variables” in 2023. With these, you could define a breakpoint set like so:

@custom-media --sm (inline-size <= 25rem);

@custom-media --md (25rem < inline-size <= 50rem);

@custom-media --lg (50rem < inline-size);

body {margin-inline: auto;}

@media (--sm) {body {inline-size: auto;}}

@media (--md) {body {inline-size: min(90vw, 40em);}}

@media (--lg) {body {inline-size: min(90vw, 55em);}}

In other words, you can use custom media queries as much as you want throughout your CSS, but change their definitions in just one place. It’s CSS variables, but for media queries! Let’s do it.

Unprefix all the things

Since we decided to abandon vendor prefixing in favor of feature flags, I want to see anything that’s still prefixed get unprefixed, in all browsers. Keep the support for the prefixed versions, sure, I don’t care, just let us write the property and value names without the prefixes, please and thank you.
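Until then, the usual defensive pattern is to declare both spellings. A small sketch, using `box-decoration-break` purely as an example of a property that has existed in both prefixed and unprefixed forms; the `.highlight` class is made up.

```css
/* Hypothetical class for inline text that wraps across lines. */
.highlight {
  -webkit-box-decoration-break: clone; /* prefixed spelling, for engines that still want it */
  box-decoration-break: clone;         /* unprefixed spelling, the one this wish is about */
}
```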

Grab bag

I still would like a way to indicate when a shorthand property is meant for logical rather than physical directions, a way to apply a style sheet to a single element, the ability to add or subtract values from a shorthand without having to rewrite the whole thing, and styles that cross resource boundaries. They’re all in the 2023 post.

Okay, that’s my list. What’s yours?